The ultimate goal of most high-level AI research is the development of a general artificial intelligence (GAI). In essence, what we want is a synthetic mind that could function the same as a human were it placed into a physical vessel of similar capability.

Most experts – not all – believe we’re decades away from anything of the sort. Unlike other incredibly complex problems such as nuclear fusion or readjusting the Hubble Constant, nobody really understands yet what GAI actually looks like.

Some researchers think deep learning is the path to machines that think like humans; others believe we’ll need an entirely new calculus to create the necessary “master algorithm”; and still others think GAI is probably impossible.

But the fact of the matter is that scientists don’t truly understand intelligence as it relates to the human brain, or consciousness as it relates to anything. We’re just scratching the gray-matter surface when it comes to understanding how intelligence and consciousness emerge in the human brain.

As far as AI goes, in lieu of a GAI all we have is patchwork neural networks and clever algorithms. It’s hard to make an argument that modern AI will ever have human intelligence and even harder to demonstrate a path towards actual robot consciousness. But it’s not impossible.

In fact, AI might already be conscious.

Mathematician Johannes Kleiner and physicist Sean Tull recently pre-published a research paper on the nature of consciousness that seems to indicate, mathematically speaking, that the universe and everything in it is imbued with physical consciousness.

Basically, the duo’s paper sorts out some of the math behind a popular theory called the Integrated Information Theory of Consciousness (IIT). It says that everything in the entire universe exhibits the traits of consciousness to some degree or another.

This is an interesting theory because it’s supported by the idea that consciousness emerges as a result of physical states. You’re conscious because of your ability to “experience” things. A tree, for example, is conscious because it can “sense” the sun’s light and bend towards it. An ant is conscious because it experiences ant stuff, and on and on it goes.

It’s a bit hard to make the leap from living creatures such as ants to inanimate objects such as rocks and spoons though. But, if you think about it, those things could be conscious because, as Neo learned in The Matrix, there is no spoon. Instead, there’s just a bunch of molecules bunched together in spoon formation. If you look closer and closer, eventually you’ll get down to the subatomic particles shared by everything that physically exists in the universe. Trees and ants and rocks and spoons are literally made of the exact same stuff.

So how does this relate to AI? Universal consciousness could be defined as individual systems at both the macro and microscopic level expressing the independent ability to act and react in accordance with environmental stimuli.

If consciousness is an indication of shared reality then it doesn’t require intelligence, only the ability to experience existence. And that means AI already demonstrates high-level consciousness compared to spoons and rocks – assuming of course that the math does support latent universal consciousness.

What does this mean? Nothing, probably. Math and algorithms shouldn’t be capable of consciousness on their own (can numbers experience reality? That’s conjecture for another day). But, if we judge the physical computer an AI system resides on with the same rigor we apply to determining whether a biological system is conscious, we can arrive at the exciting conclusion that AI might already be conscious.

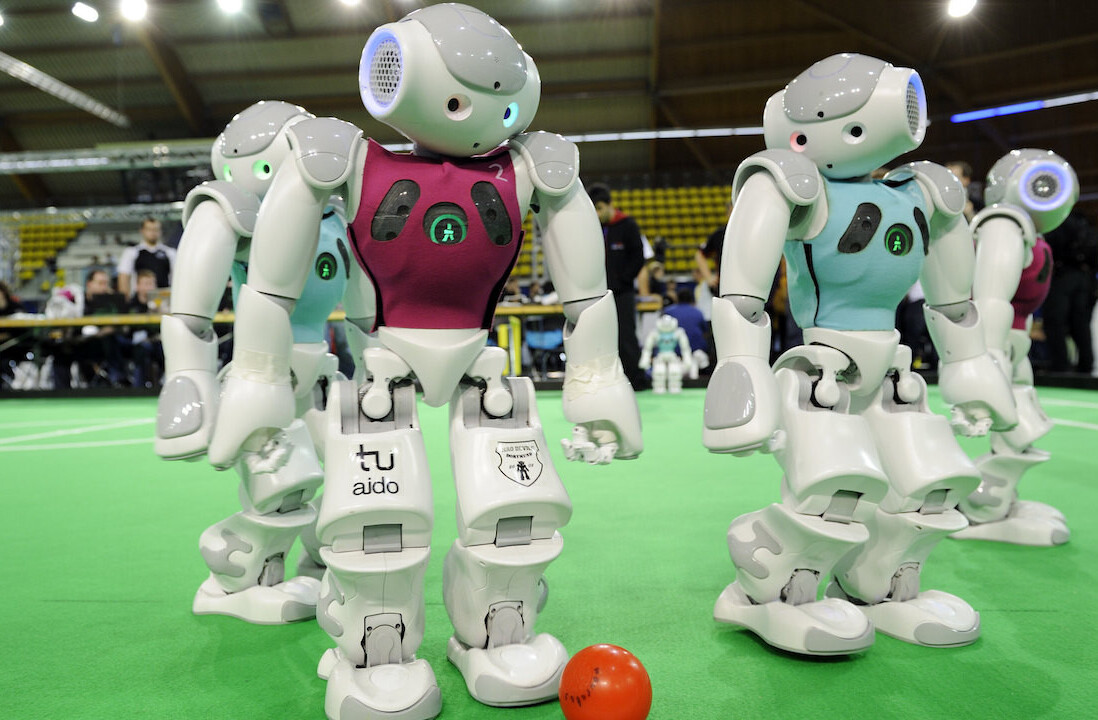

The far-future implications of this are mind-boggling. Right now, it’s difficult to care about what the experience of being a rock is like. But, if you assume everything involved in the Integrated Information Theory of Consciousness extrapolates correctly and that we’ll solve GAI, one day we’ll have conscious robots that are intelligent enough to explain what it’s like to experience existence as an inanimate object.
