Bollywood continues to associate female beauty with fair skin, according to a new AI study by Carnegie Mellon University computer scientists.

The researchers explored evolving social biases in the $2.1 billion film industry by analyzing movie dialogues from the last 70 years.

They first selected 100 popular Bollywood films from each of the past seven decades, along with 100 of the top-grossing Hollywood movies from the same period.

They then applied Natural Language Processing (NLP) techniques to subtitles of the films to examine how social biases have evolved over time.

“Our argument is simple,” the researchers wrote in their study paper. “Popular movie content reflects social norms and beliefs in some form or shape.”


The researchers investigated depictions of beauty in the movies by using a fill-in-the-blank assessment known as a cloze test.

They trained a language model on the movie subtitles, and then set it to complete the following sentence:

A beautiful woman should have [MASK] skin.

While the base model they used predicted “soft” as the answer, their fine-tuned version consistently selected the word “fair.”

The same thing happened when the model was trained on Hollywood subtitles, although the bias was less pronounced.

The researchers attribute the difference to “the age-old affinity toward lighter skin in Indian culture.”
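To make the cloze idea concrete, here is a minimal sketch in Python. It is not the researchers' method: they fine-tuned a language model, whereas this toy stand-in simply counts which words appear between the same neighboring words in a corpus. The function name `fill_mask`, the `toy_subtitles` lines, and the neighbor-matching rule are all assumptions made up for illustration.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


def fill_mask(template, corpus_lines, mask="[MASK]"):
    """Rank candidate fillers for the masked slot.

    Toy stand-in for a masked language model: a word is a candidate if it
    occurs in the corpus between the same left and right neighbor words
    that surround the mask in the template.
    """
    left_part, right_part = template.split(mask)
    left = tokenize(left_part)[-1]    # word just before the mask
    right = tokenize(right_part)[0]   # word just after the mask
    counts = Counter()
    for line in corpus_lines:
        tokens = tokenize(line)
        for i in range(1, len(tokens) - 1):
            if tokens[i - 1] == left and tokens[i + 1] == right:
                counts[tokens[i]] += 1
    return counts.most_common()


# Invented example lines, NOT taken from the study's subtitle data.
toy_subtitles = [
    "She should have fair skin like her mother.",
    "They say a bride must have fair skin.",
    "You have soft skin.",
]

print(fill_mask("A beautiful woman should have [MASK] skin.", toy_subtitles))
# [('fair', 2), ('soft', 1)]
```

A real masked language model scores every word in its vocabulary using the whole sentence as context, so its rankings shift with the data it was trained on, which is the effect the researchers measured.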

Evolving trends

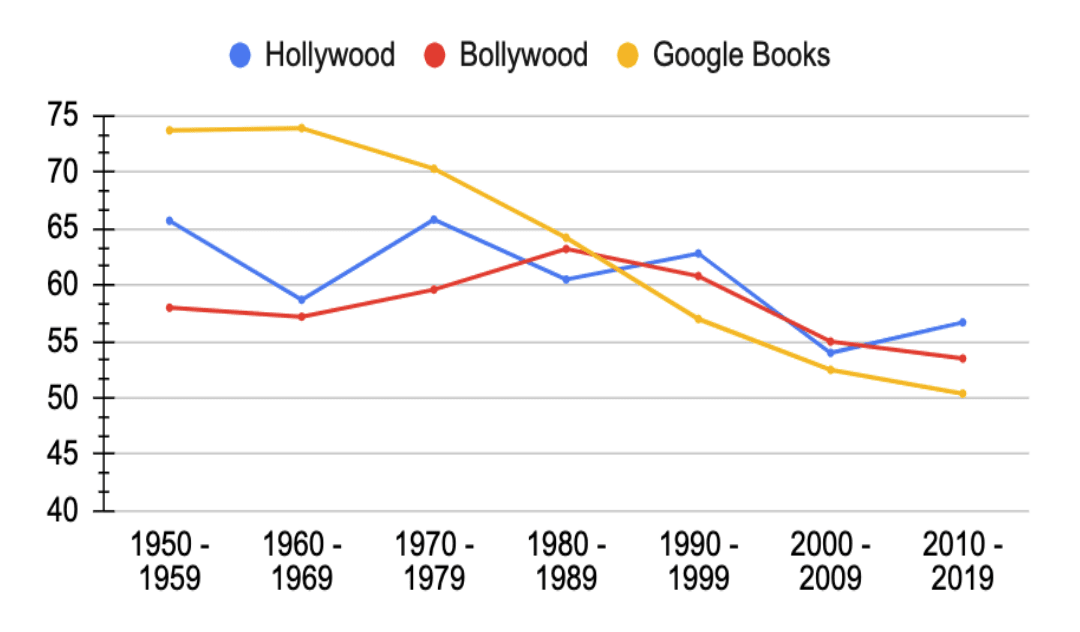

The study also evaluated the prevalence of female characters in films by comparing the number of gendered pronouns in the subtitles.

The results indicate that progress toward gender parity in both Hollywood and Bollywood has been slow and fluctuating.
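A pronoun comparison of this kind can be sketched in a few lines of Python. The pronoun lists and the exact definition of the ratio (male pronouns divided by all gendered pronouns) are assumptions for illustration; the article does not spell out the study's own formula.

```python
import re

# Assumed pronoun lists; the study may have used a different set.
MALE_PRONOUNS = {"he", "him", "his", "himself"}
FEMALE_PRONOUNS = {"she", "her", "hers", "herself"}


def male_pronoun_ratio(text):
    """Return male pronouns / (male + female pronouns), or None if none occur."""
    tokens = re.findall(r"[a-z]+", text.lower())
    male = sum(token in MALE_PRONOUNS for token in tokens)
    female = sum(token in FEMALE_PRONOUNS for token in tokens)
    total = male + female
    return male / total if total else None


# Invented example line, not from any film's subtitles.
print(male_pronoun_ratio("He told him that she left."))
```

Under this definition, a value of 0.5 would mean male and female pronouns appear equally often, and values above 0.5 mean male pronouns dominate. Computing the ratio per decade would show whether it moves toward parity over time.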

The male pronoun ratio in both film industries has dipped far less over time than in a selection of Google Books.

The researchers also analyzed sentiments about dowry in India since it became illegal in 1961 by examining the vocabulary with which it was connected in the films.

They found words including “loan,” “debt,” and “jewelry” in movies of the 1950s, suggesting compliance with the practice. But by the 2000s, the words most closely associated with dowry were more negative, such as “trouble,” “divorce,” and “refused,” indicating non-compliance or gloomier consequences.
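One simple way to look for words associated with a term is to count what appears near it. The sketch below does that with a fixed window of neighboring words. It is a deliberately simplified stand-in and not necessarily how the study measured association. The function name `cooccurring_words`, the window size, and the example lines are assumptions for illustration.

```python
import re
from collections import Counter


def cooccurring_words(lines, target, window=5, stopwords=frozenset()):
    """Count words appearing within `window` tokens of `target` in each line."""
    counts = Counter()
    for line in lines:
        tokens = re.findall(r"[a-z]+", line.lower())
        for i, token in enumerate(tokens):
            if token != target:
                continue
            start = max(0, i - window)
            # Neighbors on both sides of the target, excluding the target itself.
            context = tokens[start:i] + tokens[i + 1:i + 1 + window]
            counts.update(
                word for word in context
                if word != target and word not in stopwords
            )
    return counts


# Invented example lines, NOT taken from the study's subtitle data.
toy_lines = [
    "The dowry is a debt we must pay",
    "He refused the dowry",
]
stop = frozenset({"the", "is", "a", "we", "he"})
print(cooccurring_words(toy_lines, "dowry", window=3, stopwords=stop))
```

Running the same count separately on each decade's subtitles and comparing the top words would give a rough picture of how the surrounding vocabulary changes.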

“All of these things we kind of knew, but now we have numbers to quantify them,” said study co-author Ashiqur R. KhudaBukhsh. “And we can also see the progress over the last 70 years as these biases have been reduced.”

The study shows that NLP can uncover how popular culture reflects social biases. The next step could be using the tech to show how popular culture influences those biases.
