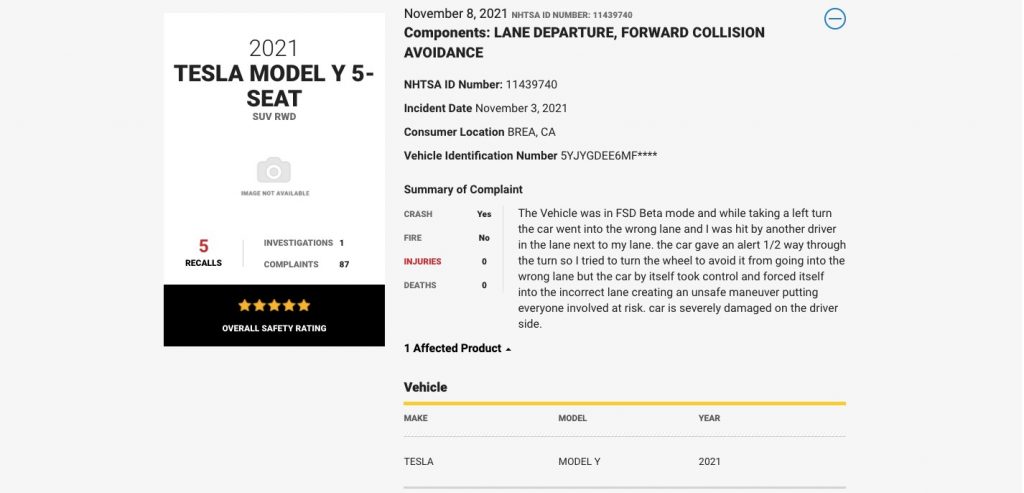

An anonymous owner of a 2021 Tesla Model Y has filed a complaint with the National Highway Traffic Safety Administration (NHTSA), reporting that their vehicle crashed while Full Self-Driving (FSD) Beta was engaged.

The accident took place on November 3 in Brea, California. Fortunately, there were no injuries or fatalities, but disturbingly enough, the owner blames FSD for causing the crash. Specifically, they say the car steered into the wrong lane and didn’t respond to driver input.

This is the driver’s statement on NHTSA’s official page:

The Vehicle was in FSD Beta mode and while taking a left turn the car went into the wrong lane and I was hit by another driver in the lane next to my lane.

The car gave an alert 1/2 way through the turn so I tried to turn the wheel to avoid it from going into the wrong lane but the car by itself took control and forced itself into the incorrect lane creating an unsafe maneuver putting everyone involved at risk. Car is severely damaged on the driver side.

That’s strange. And frightening, to be honest.

We’ve previously seen FSD cause Teslas to attempt to enter the wrong lane, and we’ve seen the feature disengage abruptly. But withholding control of the car from the driver? That’s a first.

A driver should always be able to take control of the vehicle and steer it out of a dangerous situation, and FSD is supposed to deactivate when force is applied to the wheel.

Extra worries for NHTSA

While NHTSA needs to further investigate the accident and validate the complaint (in case it’s a fake report), this incident will only add to the agency’s trust issues with Tesla software if the allegations are found to be true.

In October, Tesla recalled 11,704 vehicles after identifying a software error that could cause a false forward-collision warning or unexpected activation of the automatic emergency braking system.

In its safety recall report, the agency said that Tesla “uninstalled FSD 10.3 after receiving reports of inadvertent activation of the automatic emergency braking system” and then “updated the software and released FSD version 10.3.1 to those vehicles affected.”

Seeing some issues with 10.3, so rolling back to 10.2 temporarily.

Please note, this is to be expected with beta software. It is impossible to test all hardware configs in all conditions with internal QA, hence public beta.

— Elon Musk (@elonmusk) October 24, 2021

And let’s not forget that in August NHTSA opened a probe into Tesla’s Autopilot software, citing the cars’ repeated collisions with parked first-responder vehicles.

So, how can we not mistrust Tesla’s semi-autonomous software? After all, the company itself has bluntly warned about its limitations.

Beta 9’s release notes are my favorite regarding FSD:

It may do the wrong thing at the worst time, so you must always keep your hands on the wheel and pay extra attention on the road.

The problem here is, dear Tesla: what if I can’t take control of the car when it chooses to do “the wrong thing at the worst time”?
