Clumsy robots have been offered hope of improving their coordination after MIT researchers found a new way to help them find their bearings.

The system gives soft robots a greater awareness of their movements by analysing motion and position data through a ‘sensorized’ skin.

It works by collecting feedback from sensors on the robot’s body. A deep learning model then analyses the data to estimate the robot’s 3D configuration.

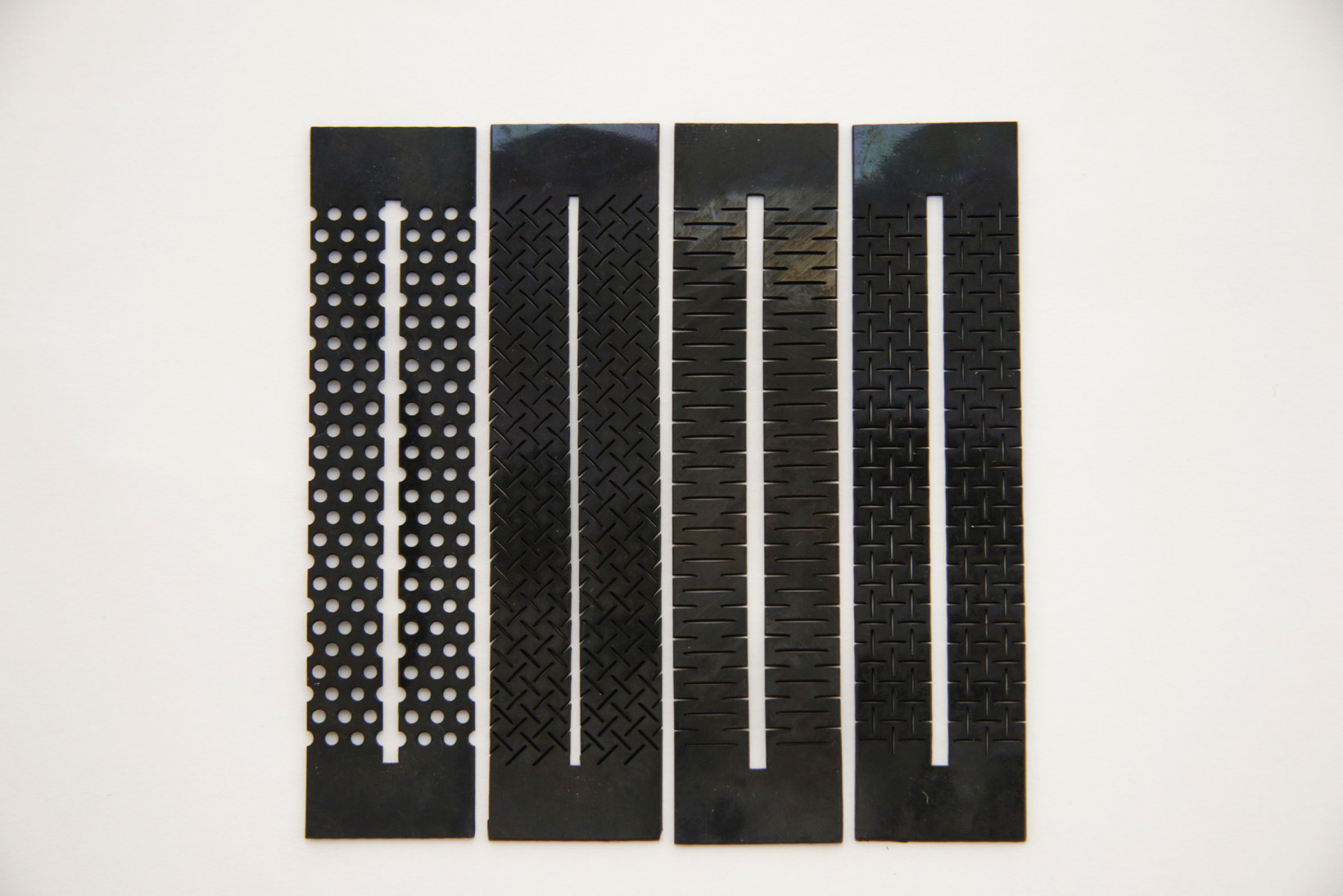

The sensors are made of conductive silicone sheets, which the researchers cut into patterns inspired by kirigami, a variation of origami that involves cutting as well as folding paper. These patterns make the material sufficiently flexible and stretchable to be applied to soft robots.

A deep neural network then captures signals from the sensors to estimate the robot’s configuration.
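To make the idea concrete, here is a toy sketch of the general approach: a small neural network that learns to map skin sensor readings to a 3D position. It is not the MIT team's model. The number of sensors, the synthetic data, the network size, and every function name below are assumptions made up for illustration.

```python
import numpy as np

N_SENSORS = 12   # hypothetical number of skin sensor channels
N_SAMPLES = 512  # hypothetical number of recorded poses

rng = np.random.default_rng(0)

# Synthetic stand-in for motion-capture data: a 3D position per pose.
positions = rng.uniform(-1.0, 1.0, size=(N_SAMPLES, 3))
# Synthetic stand-in for sensor signals: an invented nonlinear
# function of the position, plus a little noise.
mixing = rng.normal(size=(3, N_SENSORS))
signals = np.tanh(positions @ mixing) + 0.01 * rng.normal(size=(N_SAMPLES, N_SENSORS))


def init_params(n_in, n_hidden, n_out, rng):
    """Random weights for a network with one hidden layer."""
    return {
        "W1": rng.normal(scale=0.3, size=(n_in, n_hidden)),
        "b1": np.zeros(n_hidden),
        "W2": rng.normal(scale=0.3, size=(n_hidden, n_out)),
        "b2": np.zeros(n_out),
    }


def forward(params, x):
    """Return hidden activations and the predicted 3D positions."""
    hidden = np.tanh(x @ params["W1"] + params["b1"])
    return hidden, hidden @ params["W2"] + params["b2"]


def mse(pred, target):
    """Mean squared error over all samples and coordinates."""
    return float(np.mean((pred - target) ** 2))


def train(params, x, y, steps=500, lr=0.05):
    """Full-batch gradient descent on the mean squared error (updates params in place)."""
    for _ in range(steps):
        hidden, pred = forward(params, x)
        grad_pred = 2.0 * (pred - y) / pred.size        # d(mse)/d(pred)
        grad_hidden = grad_pred @ params["W2"].T
        grad_pre = grad_hidden * (1.0 - hidden ** 2)    # derivative of tanh
        params["W2"] -= lr * (hidden.T @ grad_pred)
        params["b2"] -= lr * grad_pred.sum(axis=0)
        params["W1"] -= lr * (x.T @ grad_pre)
        params["b1"] -= lr * grad_pre.sum(axis=0)
    return params


params = init_params(N_SENSORS, 16, 3, rng)
loss_before = mse(forward(params, signals)[1], positions)
train(params, signals, positions)
loss_after = mse(forward(params, signals)[1], positions)
print(f"error before training: {loss_before:.4f}, after: {loss_after:.4f}")
```

In a real system the training targets would come from measurements of the robot's actual pose rather than from random numbers, and the network would likely be far larger. The sketch only shows the shape of the problem: sensor signals in, estimated configuration out.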

The system aims to overcome the problem of controlling soft robots that can move in countless directions by giving them “proprioception”: an awareness of their position and movements. It could eventually make artificial limbs better at handling objects.

Sensorized skin potential

The researchers used the system to teach an elephant trunk-shaped robot to predict its own position as it rotated and extended.

“We want to use these soft robotic trunks, for instance, to orient and control themselves automatically, to pick things up and interact with the world,” said MIT researcher Ryan Truby, who co-wrote a paper describing how the system works. “This is a first step toward that type of more sophisticated automated control.”

Truby admits that the system cannot yet capture subtle or dynamic motion. But it could at least reduce the clumsiness that has embarrassed robotkind for decades.
