Cooperation between people holds society together, but new research suggests we’ll be less willing to compromise with “benevolent” AI.

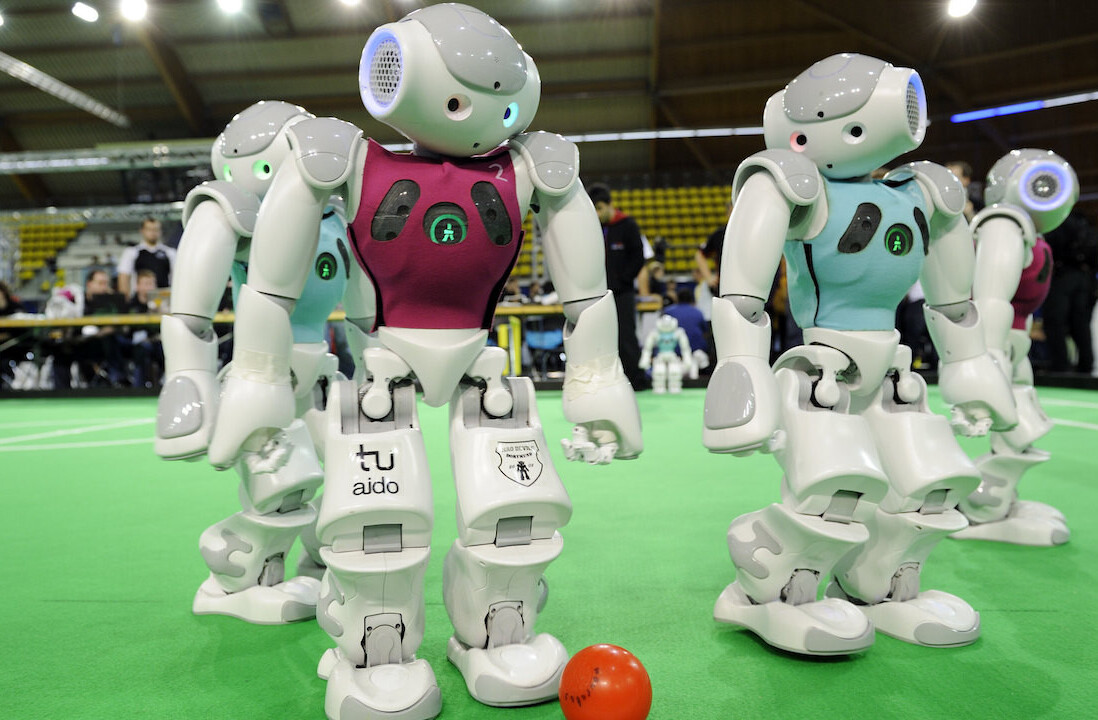

The study explored how humans will interact with machines in future social situations — such as self-driving cars that they encounter on the road — by asking participants to play a series of social dilemma games.

The participants were told that they were interacting with either another human or an AI agent. Per the study paper:

Players in these games faced four different forms of social dilemma, each presenting them with a choice between the pursuit of personal or mutual interests, but with varying levels of risk and compromise involved.

The researchers then compared what the participants chose to do when interacting with AI or anonymous humans.

Study co-author Jurgis Karpus, a behavioral game theorist and philosopher at the Ludwig Maximilian University of Munich, said they found a consistent pattern:

People expected artificial agents to be as cooperative [sic] as fellow humans. However, they did not return their benevolence as much and exploited the AI more than humans.

Social dilemmas

One of the experiments they used was the prisoner’s dilemma. In the game, players accused of a crime must choose between cooperation for mutual benefit or betrayal for self-interest.
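The structure of that choice can be sketched in a few lines of Python. This is a rough illustration only: the payoff numbers are the conventional textbook values, not figures from the study, and the names `PAYOFFS` and `best_response` are made up for the example.

```python
# Illustrative prisoner's dilemma. The payoff values are textbook
# conventions (an assumption), NOT the amounts used in the study.
COOPERATE, DEFECT = "cooperate", "defect"

# (my move, other player's move) -> (my payoff, other player's payoff)
PAYOFFS = {
    (COOPERATE, COOPERATE): (3, 3),  # mutual cooperation: both do well
    (COOPERATE, DEFECT): (0, 5),     # I am betrayed: worst outcome for me
    (DEFECT, COOPERATE): (5, 0),     # I betray: best outcome for me
    (DEFECT, DEFECT): (1, 1),        # mutual betrayal: both do poorly
}


def best_response(other_move):
    """Return the move that maximizes my own payoff against other_move."""
    return max((COOPERATE, DEFECT), key=lambda mine: PAYOFFS[(mine, other_move)][0])


if __name__ == "__main__":
    for other in (COOPERATE, DEFECT):
        print(f"If the other player chooses to {other}, my best response is to {best_response(other)}")
```

With these assumed numbers, betrayal pays more for the individual whatever the other player does, even though mutual cooperation leaves both players better off than mutual betrayal. That tension is what makes the game a dilemma.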

While the participants embraced risk with both humans and artificial intelligence, they betrayed the trust of AI far more frequently.

However, they did trust their algorithmic partners to be as cooperative as humans.

“They are fine with letting the machine down, though, and that is the big difference,” said study co-author Dr Bahador Bahrami, a social neuroscientist at LMU. “People even do not report much guilt when they do.”

The findings suggest that the benefits of smart machines could be restricted by human exploitation.

Take the example of autonomous cars. If no one lets them merge into traffic, the vehicles will back up on side roads and create congestion. Karpus notes that this could have dangerous consequences:

If humans are reluctant to let a polite self-driving car join from a side road, should the self-driving car be less polite and more aggressive in order to be useful?

While the risks of unethical AI attract most of our concerns, the study shows that trustworthy algorithms can generate another set of problems.
