We’re three and a half years away from electing another president here in the US, but it’s never too early to prepare ourselves for the impending crapshow that is US politics.

The good news is, going forward, you won’t have to think so much. The age of data-based politics is coming to a close thanks to the innovations created by the 2016 Donald Trump campaign and the counteracting tactics employed by the Biden team in 2020.

Data used to be the most important commodity in politics. When Trump won in 2016, it wasn’t on the strength of his platform (he didn’t have one). It was on the strength of his data gathering and ad-targeting.

But that strategy was proven ineffective when it went up against the Biden team who, unlike Hillary Clinton’s campaign, conducted effective counter-messaging across the social media spectrum.

Data-based politics results in politicians gathering information on us without our consent or knowledge. They then exploit that information to figure out what messages are likely to get people fired up.

Given an issue we’re not sure about, humans are likely to believe anything for a few moments, as long as it comes from a trusted source. And this is especially true when it comes to AI.

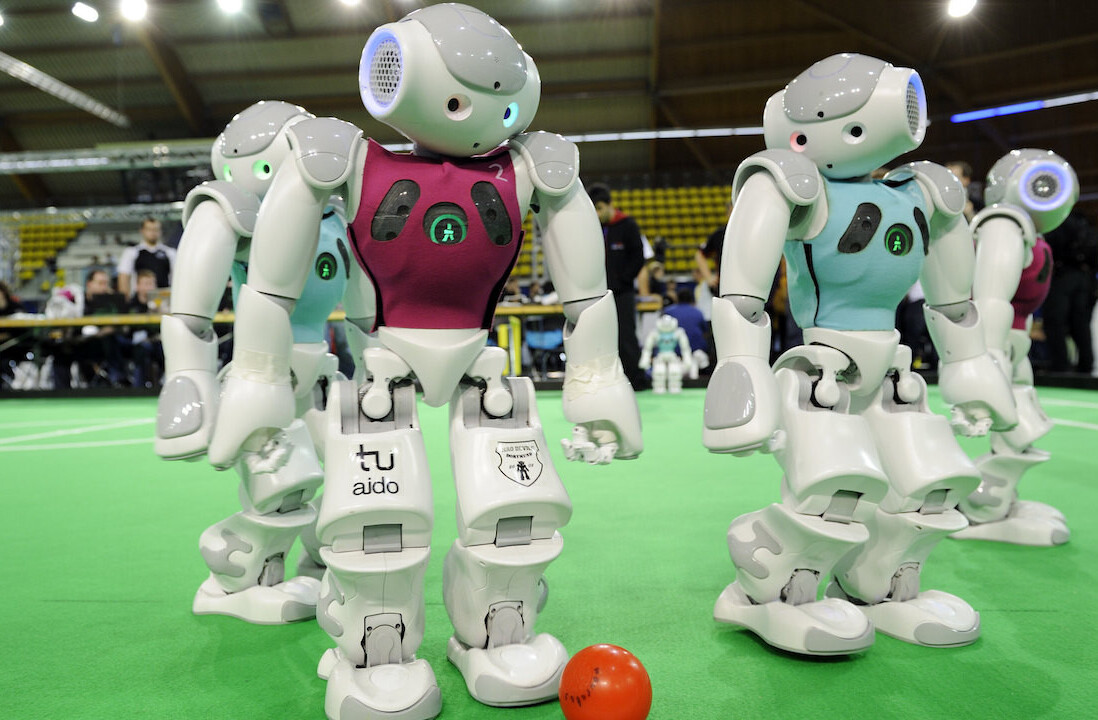

A duo of researchers from Drexel University and Worcester Polytechnic Institute recently published a study demonstrating how easily humans trust machines and each other. The results are a bit scary when you view them through the lens of political and corporate manipulation.

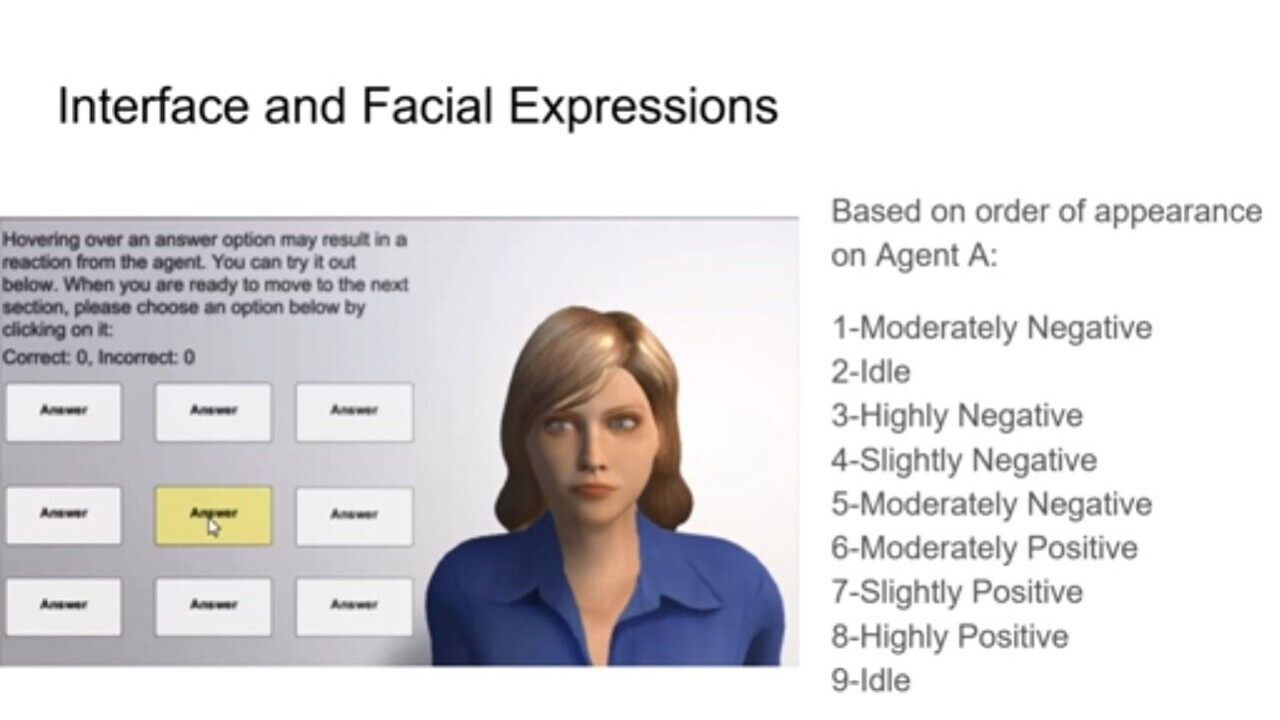

Let’s start with the study. The researchers asked groups of people to answer multiple-choice questions with the help of an AI avatar. The avatar was given a human visage and animated to either nod and smile or frown and shake its head. In this way, the AI could indicate “yes” and “no” with either mild or strong sentiment.

When users hovered their mouse over an answer, the AI would either shake its head, nod, or remain idle. Users were then asked to evaluate whether the AI was helping them or hindering them.

One group worked exclusively with a bot that was always right. Another group worked with a bot that was always trying to mislead them, and a third group worked with a mix of the two.

The results were astounding. People tend to trust the AI implicitly at first, no matter what the questions are, but they lose that trust quickly once they find out the AI was wrong.

Per Reza Moradinezhad, one of the scientists responsible for the study, in a press release:

> Trust for computer systems is usually high right at the beginning because they are seen as a tool, and when a tool is out there, you expect it to work the way it’s supposed to, but hesitation is higher for trusting a human since there is more uncertainty.
>
> However, if a computer system makes a mistake, the trust for it drops rapidly as it is seen as a defect and is expected to persist. In case of humans, on the other hand, if there already is established trust, a few examples of violations do not significantly damage the trust.

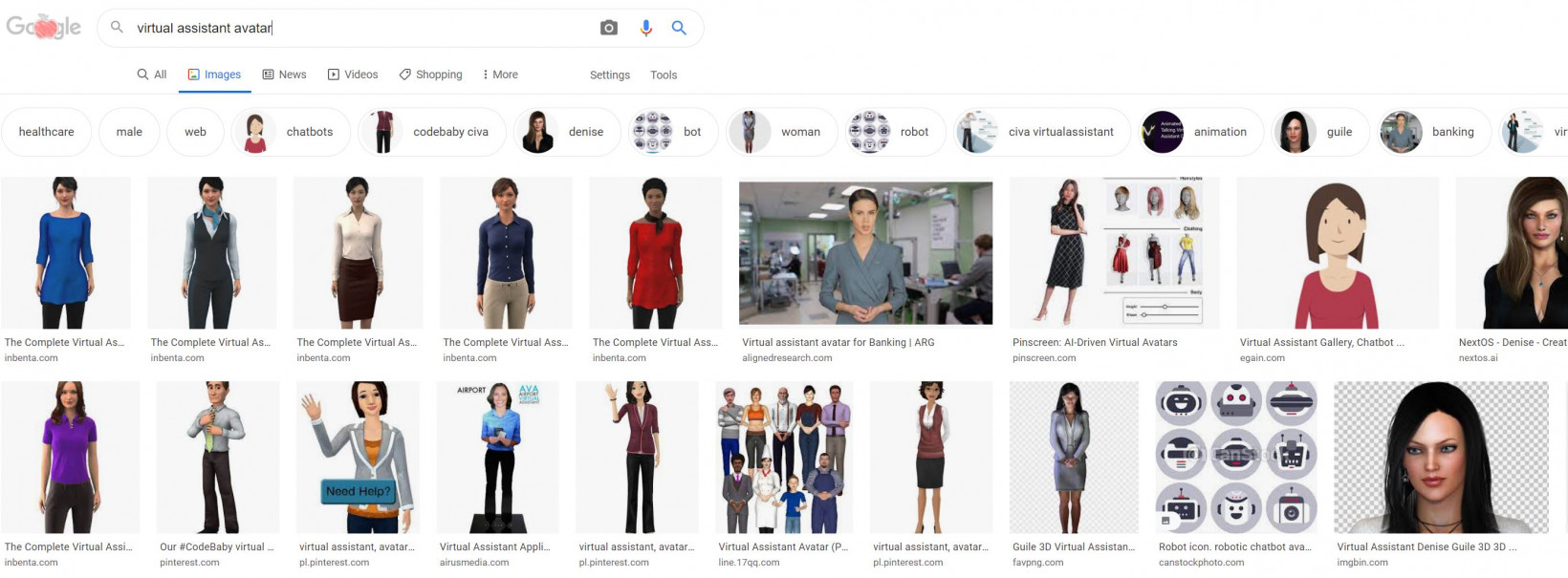

Traditionally, this has meant that it’s smarter to find people who look trustworthy than to find trustworthy people. So what does trustworthy look like?

It depends on your target audience. A Google search for “woman news anchor” makes it clear that the media has a strong bias.

And you need only glance at Congress, which is about 77 percent white and male, to understand what “trust” looks like to US voters.

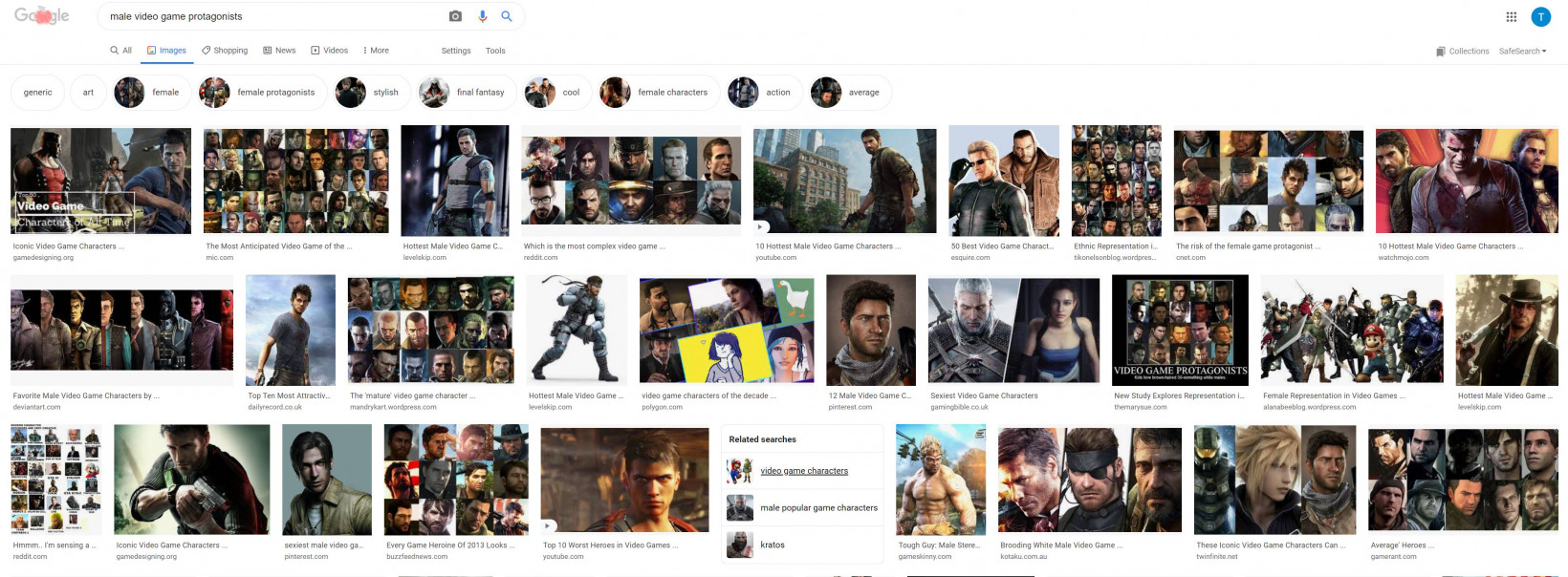

Even entertainment media is dominated by trust concerns. If you perform a Google search for “male video game protagonist,” you realize that “scruffy, 30s, white guy” is who gamers trust to entertain them.

Marketing teams and corporations know this. Before the practice was recognized as illegal, many US businesses made it company policy to hire only “attractive women” for customer service, secretarial, and receptionist roles.

In the wake of the 2016 elections, social media companies reevaluated how they allow data to be used and manipulated on their platforms. This arguably hasn’t amounted to any meaningful change, but, luckily for social media platforms, the calculus behind society’s problems has changed.

Our data’s out there now. As individuals we like to think we’re more careful with what we share and how we allow our data to be used, but the fact of the matter is that big tech has been able to extract so much data from us in the past two decades that our new “normal” is a data bonanza for corporate and political entities.

The next step is for politicians to figure out how to exploit our trust as easily as they can exploit our data. And that’s exactly the kind of problem artificial intelligence is good at solving.

Once politicians know what we like and don’t like, what faces we spend the most time looking at, and what we’re saying to each other when we think few people are paying attention, it’s simple for them to turn that into an actionable personality.

And the technology is almost there. Based on what we’ve seen from GPT-3, it should be simple to train a narrow-domain text generator to work for politicians. We can’t be far from a Biden Bot that can discuss policy or a Tucker Carlson-inspired AI that can debate with individuals on the internet. We’re likely to see Rush Limbaugh raised from the dead as a GOP outreach avatar in the form of an AI trained on his words.

Artificial talking heads are coming. Mark my words.

It might sound comical when you put it all into a sentence: in the future, US citizens will cast their votes based on which corporate/political AI avatars they trust the most. But considering that more than 50% of people in the US don’t trust the news media, and that the overwhelming majority of us vote along strict party lines, it’s obvious that we’re ripe for another sociopolitical shake-up.

After all, five or six years ago most people wouldn’t have believed that social media manipulation could help elect a reality TV star who admitted he liked to “grab” women by their genitals without consent. Today, the idea that Facebook and Twitter can influence an election is common sense.
