Since its invention in 1970, email has changed very little. Its ease of use has made it the most common method of business communication, used by 3.7 billion people worldwide. At the same time, it has become the intrusion point cybercriminals target most, with devastating outcomes.

When email was first envisioned, it was built for connectivity. Network communication was in its early days, and merely creating a digital alternative to the mailbox was revolutionary and difficult enough. Today, however, it is trivially easy to spoof an email and impersonate others. Last year, 70 percent of organizations reported that they had fallen victim to advanced phishing attacks. Some 56 million phishing emails are sent every day, and it takes a phishing campaign 82 seconds on average to hook its first victim.

The problem is not a lack of awareness, but rather misinformation. Many propose the DMARC email authentication protocol (short for “Domain-based Message Authentication, Reporting, and Conformance”) as the solution to identity spoofing. However, DMARC only works if both sides of the communication enforce it, making it effective only for inter-organization communications. This means a celebrity or known source cannot use it to prevent a hacker from impersonating them and contacting their clients. Hackers are aware of DMARC’s limitations, and while law enforcement bodies are still trying to catch up, hackers have already moved beyond secure email gateways (SEGs) and DMARC, leaving these defenses to provide little more than a false sense of security.
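To make the "both sides" point concrete: a sending domain publishes its DMARC policy as a DNS TXT record at `_dmarc.<domain>`, and the policy only has an effect if the receiving server looks it up and honors it. The snippet below is a minimal sketch of the receiver's half, assuming the record text has already been fetched. The record shown for `example.com` and the helper names `parse_dmarc_record` and `is_enforcing` are illustrative, not part of any library.

```python
def parse_dmarc_record(txt: str) -> dict[str, str]:
    """Parse a DMARC TXT record into a tag -> value mapping."""
    tags = {}
    for part in txt.split(";"):
        part = part.strip()
        if not part or "=" not in part:
            continue  # skip empty or malformed fragments
        key, _, value = part.partition("=")
        tags[key.strip().lower()] = value.strip()
    return tags


def is_enforcing(tags: dict[str, str]) -> bool:
    """True when the domain asks receivers to quarantine or reject failures."""
    policy = tags.get("p", "none").lower()
    return tags.get("v") == "DMARC1" and policy in {"quarantine", "reject"}


# Hypothetical record, as it might be published at _dmarc.example.com
record = "v=DMARC1; p=reject; rua=mailto:dmarc-reports@example.com"
tags = parse_dmarc_record(record)
print(tags["p"], is_enforcing(tags))
```

A policy of `p=none` only requests reports, so a domain that publishes it gets visibility but no blocking. A policy published for one domain also says nothing about mail sent from a different, lookalike domain.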

Human operators are not enough either. Hackers use Distributed Spam Distraction and polymorphic attacks to overwhelm operators and cloak their malicious actions. Once attackers defeat rule-based email verification systems, operators are forced to spend tremendous amounts of time on each individual email. Inevitably, some things fall through the cracks, as we witnessed in 2016 with the case of John Podesta, the chairman of Hillary Clinton’s presidential campaign.

What makes things worse is a recently popularized technique called the visual similarity attack. Criminals create fake login pages that look identical to those of legitimate websites, for instance a Gmail login page, and trick their victims into entering their credentials on these intermediate pages. This attack has tricked both human operators and email protection tools: humans because of the similarities, and automated tools because these fake pages usually live on short-lived domains with no prior history of criminal activity.

So far, only artificial intelligence has been able to cope with all the above attack scenarios.

How AI rewrites the rules of email protection

Phishing attacks are much like application-based zero-day hacks. In application-based zero-day attacks, hackers discover and exploit an unknown vulnerability in a specific application to infiltrate a system. Email is used with various apps, but in phishing the targets are the users themselves, who are manipulated into revealing their passwords or downloading malware in ways never seen before. Since email users have varying levels of cybersecurity knowledge, many inbox protection tools try to prevent malicious emails from reaching those users in the first place.

However, hackers are extremely creative, and as many as 25 percent of phishing emails bypass traditional Secure Email Gateways. For that reason, we need a tool that fights phishing where it is most effective: inside the mailbox.

AI can go beyond signature detection and dynamically learn mailbox and communication habits. The system can then automatically detect anomalies based on both email data and metadata, leading to improved trust and authentication of email communications.
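As a rough illustration of what learning communication habits from metadata can mean, the sketch below keeps a per-mailbox record of which addresses each display name has used, then flags a familiar name arriving from an unfamiliar address. This is a deliberately simple stand-in for a learned model, not a description of any product. The `SenderBaseline` class and the sample addresses are hypothetical.

```python
from collections import defaultdict
from email.utils import parseaddr


class SenderBaseline:
    """Remembers which addresses each display name has used in past mail."""

    def __init__(self) -> None:
        self._seen: dict[str, set[str]] = defaultdict(set)

    def learn(self, from_header: str) -> None:
        """Record one historical From header, e.g. 'Alice <alice@example.com>'."""
        name, address = parseaddr(from_header)
        if name and address:
            self._seen[name.lower()].add(address.lower())

    def is_anomalous(self, from_header: str) -> bool:
        """Flag a familiar display name arriving from an unfamiliar address."""
        name, address = parseaddr(from_header)
        known = self._seen.get(name.lower())
        return bool(known) and address.lower() not in known


baseline = SenderBaseline()
baseline.learn("Alice Smith <alice@example.com>")
print(baseline.is_anomalous("Alice Smith <alice@example.com>"))
print(baseline.is_anomalous("Alice Smith <alice@examp1e-mail.net>"))
```

A sender the mailbox has never seen is not flagged here, because there is no habit to deviate from. A real system would weigh many more signals, such as sending times, reply chains and writing style.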

Anything predictable will be automated by AI, leaving the human worker to handle exceptional situations.

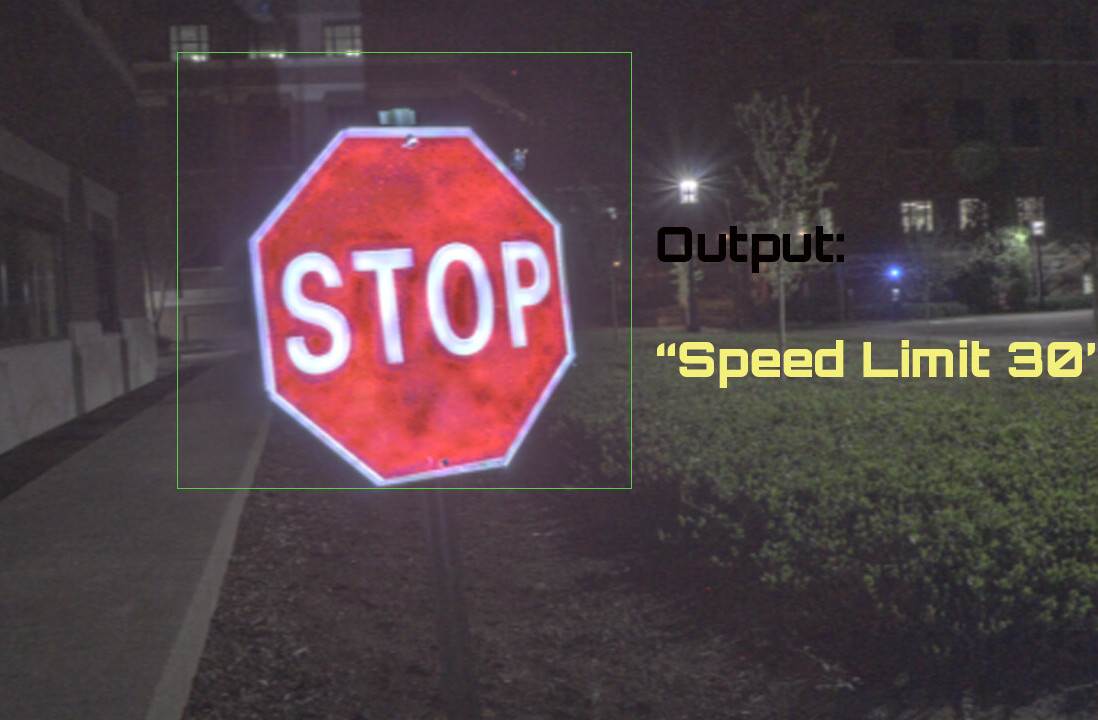

AI can also move beyond detecting blacklisted URLs. Using computer vision, the system can scan inbound links in real time and look for visual indications of whether a login page is fake, automatically blocking access to verified malicious URLs.
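One simple way to compare how two pages look is a perceptual hash. The sketch below uses an average hash over a tiny grayscale grid and counts differing bits. It is a much cruder technique than a trained vision model and is shown only to make the idea tangible. It assumes the page screenshots have already been rendered and downscaled to small brightness grids, and the function names and the `max_distance` threshold are illustrative choices.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """Build a bit fingerprint: 1 where a pixel is brighter than the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for value in flat:
        bits = (bits << 1) | (1 if value > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bit positions where two fingerprints differ."""
    return bin(a ^ b).count("1")


def looks_like(candidate: list[list[int]], reference: list[list[int]],
               max_distance: int = 2) -> bool:
    """True when the two grids are visually close under the average hash."""
    distance = hamming_distance(average_hash(candidate), average_hash(reference))
    return distance <= max_distance


# Toy 4x4 brightness grids standing in for downscaled screenshots
genuine = [[200, 200, 200, 200], [200, 30, 30, 200],
           [200, 30, 30, 200], [200, 200, 200, 200]]
lookalike = [[190, 205, 198, 201], [202, 40, 25, 199],
             [197, 35, 28, 203], [200, 196, 204, 195]]
print(looks_like(lookalike, genuine))
```

A page that closely resembles a known login screen but is served from an unrelated domain is the suspicious combination. Visual similarity alone is not proof of fraud, since the genuine page matches itself too.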

Another advantage of AI is its ability to scan disparate systems and detect patterns. Currently, cybersecurity tools such as SEG, spam filters, anti-malware, and incident response tools operate in silos, which creates a gap that hackers exploit.

It’s important to stress that AI should never be seen as a silver bullet. Technology alone cannot stop all threats, but it can reduce the noise so human operators can make informed decisions faster. A system is only complete if it can efficiently involve humans in the loop. These operators can make the system smarter by detecting edge cases, from which the AI learns. In turn, AI’s learning capabilities spare the operators from repeatedly dealing with similar incidents.

A complete AI protection system should also put email protection tools in employees’ hands and make it easy to report suspicious cases. A company’s employees can sometimes be its last line of defense, as a security system is only as strong as its weakest link. By creating a democratized system for reporting and resolving incidents, we can share incidents across organizations. The AI can be trained on the reports of this crowd-sourced professional community, enabling it to predict and prevent incidents in all organizations as soon as one organization has detected an attack.

Such a system can defeat phishing attacks at scale. Many hackers opt for a “spray and pray” attack, mass-mailing victims and hoping someone falls into the trap. A decentralized incident repository could gather information from many different sources and make it available to other organizations instantly, so that the entire system becomes immune to the attack as soon as the first case is detected. And with the AI trained on the same repository, deviations and polymorphic attacks can be detected automatically. Because AI detects patterns instead of hard-wired signatures, hackers find it extremely difficult to disguise their operations.
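The difference between a hard-wired signature and a pattern can be shown with a small sketch. An exact signature breaks when the attacker changes a single word, while a similarity score over the message's words still matches. The example below uses Jaccard similarity as a simple stand-in for a learned model. The `IncidentRepository` class, the 0.6 threshold and the sample messages are assumptions made for illustration.

```python
import re


def tokens(text: str) -> set[str]:
    """Lower-case word set; digits are dropped so rotating IDs do not matter."""
    return set(re.findall(r"[a-z]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of distinct words the two messages have in common."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


class IncidentRepository:
    """Shared store of reported phishing messages (in memory for this sketch)."""

    def __init__(self, threshold: float = 0.6) -> None:
        self.threshold = threshold
        self._reports: list[set[str]] = []

    def report(self, body: str) -> None:
        """Called by whichever organization spots the attack first."""
        self._reports.append(tokens(body))

    def matches_known_attack(self, body: str) -> bool:
        """True when a message is close enough to any reported incident."""
        candidate = tokens(body)
        return any(jaccard(candidate, known) >= self.threshold
                   for known in self._reports)


repo = IncidentRepository()
repo.report("Your invoice 48213 is overdue. Sign in to review payment details.")
variant = "Your invoice 90177 is overdue, please sign in to review payment details"
print(repo.matches_known_attack(variant))
```

Once one organization files the report, every other participant checking against the same repository would catch the reworded variant without having seen it before.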

Saving private email

We send 269 billion messages on average every day, and the era of social media and instant messaging apps has not replaced the mailbox. Email’s strength lies in its simplicity and its ability to connect perfect strangers. This strength is also email’s greatest weakness when it comes to cybersecurity. As hackers have advanced their tools to orchestrate attacks, we also need systems that keep the convenience of email while protecting average users who have little security training. AI is the perfect tool to offer this convenience, while constantly evolving and adapting to new threats and attacks.

This story is republished from TechTalks, the blog that explores how technology is solving problems… and creating new ones.