GPT-3 is arguably the world’s most advanced text-generator. It has 175 billion parameters and was trained on supercomputing clusters using a nearly internet-sized dataset. It’s among the most impressive generative AI systems ever created.

But it absolutely did not create a video game.

You may have read otherwise. But it’s what you didn’t read that matters.

Background

GPT-3, for those who aren’t in the know, is a big powerful AI system that generates text from prompts.

At the risk of oversimplifying, you give GPT-3 a short input and ask it to generate text. So, for example, you might say “What’s the best thing about Paris?” and GPT-3 might generate text saying “Paris is known for its majestic views and vibrant night life,” and it’ll keep spitting out new statements every time you ask it to generate.

GPT-3 is pretty good at generating text that makes sense. So, if you were to keep generating new phrases based on the “What’s the best thing about Paris?” input, it’s likely you’d get a bunch of different outputs that mostly make grammatical sense.

However, GPT-3 isn’t actually checking its facts or Googling things. It doesn’t have a database of verified information that it accesses before generating and injecting its opinion into things. It just tries to imitate the text it’s been trained on.
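To make that concrete, here’s a deliberately tiny sketch of the same idea. This is not how GPT-3 works under the hood: it’s a toy word-pair sampler, and the three-sentence training text, the `train_bigrams` and `generate` names, and the prompt are all made up for illustration. One of the training sentences is false on purpose, and the program will repeat it as readily as the true ones because nothing in it checks facts.

```python
import random
from collections import defaultdict

# Tiny invented training text (an assumption for illustration only).
# The last sentence is deliberately false.
CORPUS = (
    "paris is known for its majestic views . "
    "paris is known for its vibrant night life . "
    "paris is the capital of italy . "
)

def train_bigrams(text):
    """Record which words follow each word in the training text."""
    followers = defaultdict(list)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers

def generate(followers, prompt, max_words=12, rng=random):
    """Extend the prompt by sampling a word that followed the last one in training."""
    words = prompt.split()
    for _ in range(max_words):
        options = followers.get(words[-1])
        if not options:
            break  # the last word never appeared mid-text, so stop
        words.append(rng.choice(options))
        if words[-1] == ".":
            break  # end of sentence
    return " ".join(words)

model = train_bigrams(CORPUS)
for seed in range(3):
    # Different random seeds can produce different continuations.
    print(generate(model, "paris is", rng=random.Random(seed)))
```

Run it enough times and it will tell you Paris is the capital of Italy. It has no way to know that’s wrong; it only knows which words tended to follow which.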

Without filtering, GPT-3 is as likely to say something xenophobic about Parisians and/or the French people as it is something positive. And, most importantly, it’s just as likely to say something factually incorrect.

GPT-3 does not think. It does not understand anything. It doesn’t know what a dog is, it can’t understand the color blue, and it has no mental capacity for continuity or sense. It’s just algorithms and computer tricks.

If you can imagine 175 billion monkeys banging on 175 billion typewriters you can imagine GPT-3 at work. Except, in GPT-3’s case, instead of letters, the keys all have sentences and phrases on them. And for every monkey there’s a human standing there changing out text templates to fit specific themes.

What’s this about a video game?

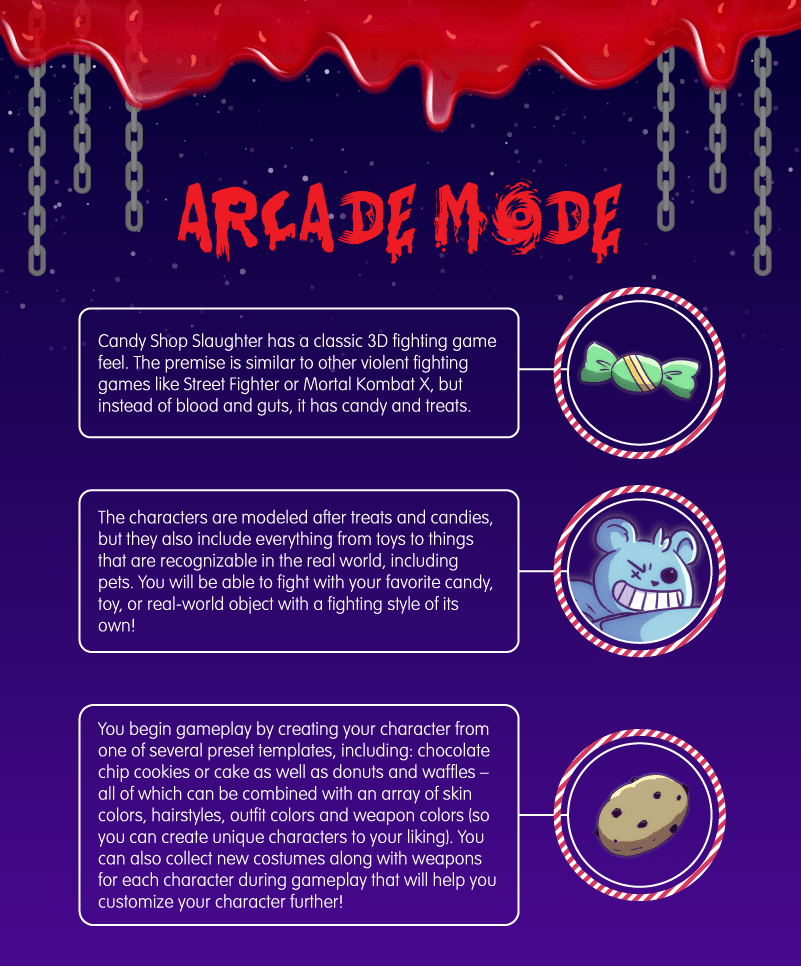

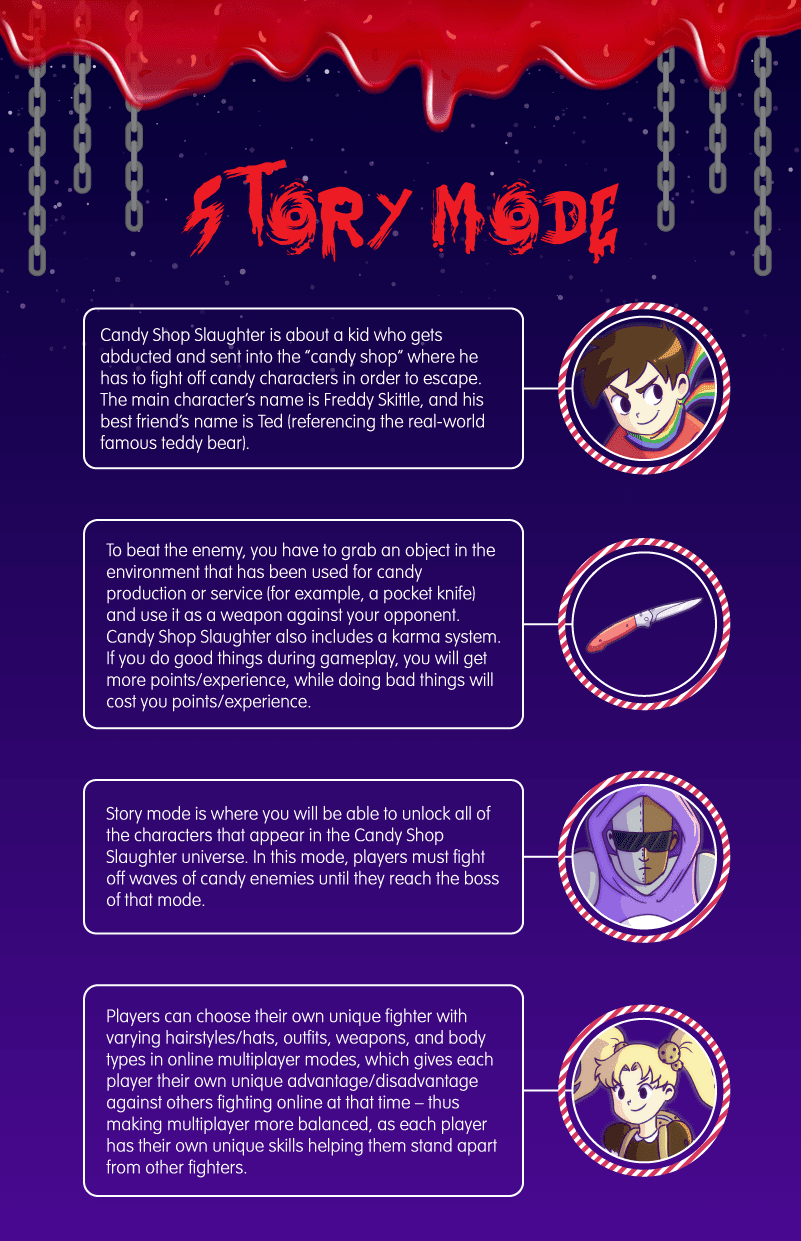

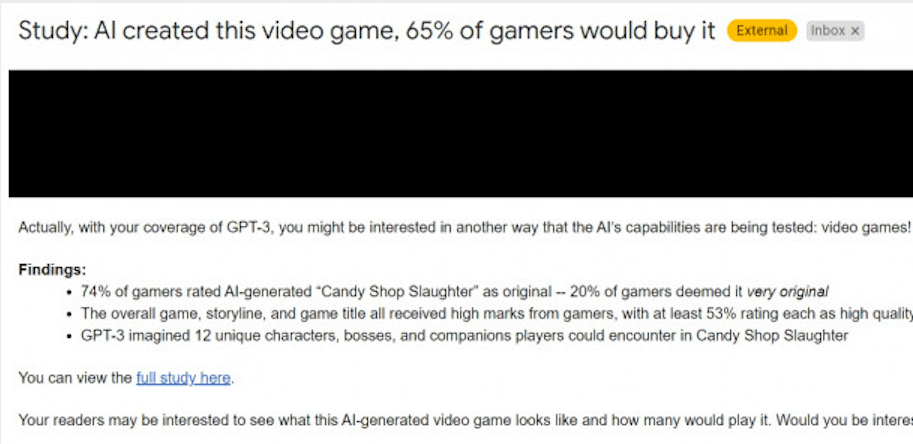

A gambling website called, aptly, “Online Roulette” started sending PR emails out a couple of weeks ago claiming that GPT-3 had created a video game.

Here’s the thing: This wasn’t a pitch for a game or even an AI-related pitch. It was a pitch for a survey about how gamers responded to a PR pitch for a game that doesn’t exist that referenced text that was generated by GPT-3.

So here are a few things to keep clear:

- The game doesn’t actually exist

- All the imagery associated with the game was created by humans

- All of the text in the PR pitch was formatted and edited by humans

I call bullshit

This isn’t to say GPT-3 can’t be involved in the development of a video game. AI Dungeon is a game that uses text-generating AI to create novel text-based game experiences. As anyone who’s played it can attest, it’s often cogent in a surreal way. But it’s just as often weird and nonsensical.

However, this marketing pitch from Online Roulette has nothing to do with the creation of an actual game.

Let’s start with the survey and work our way back. Here’s the “methodology” section on the website the original pitch refers to:

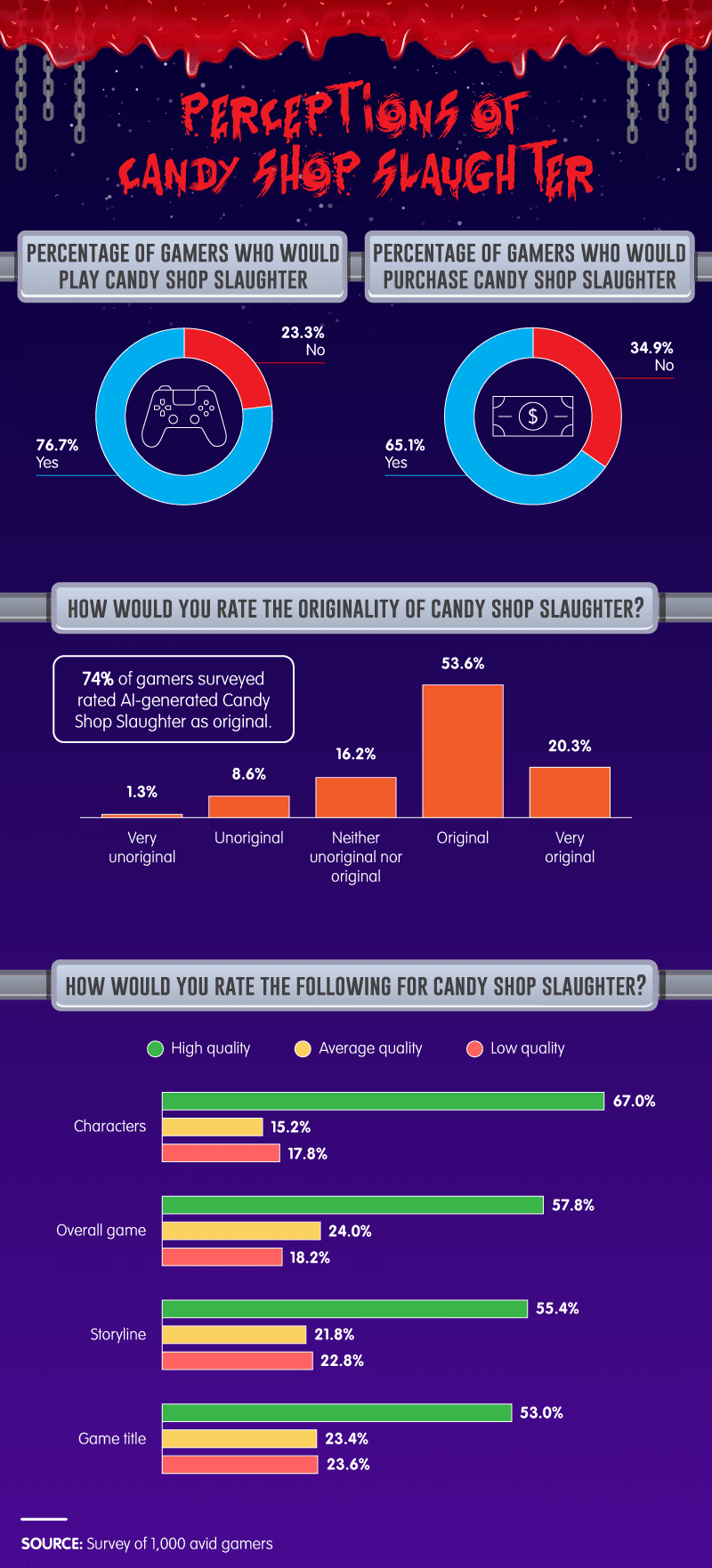

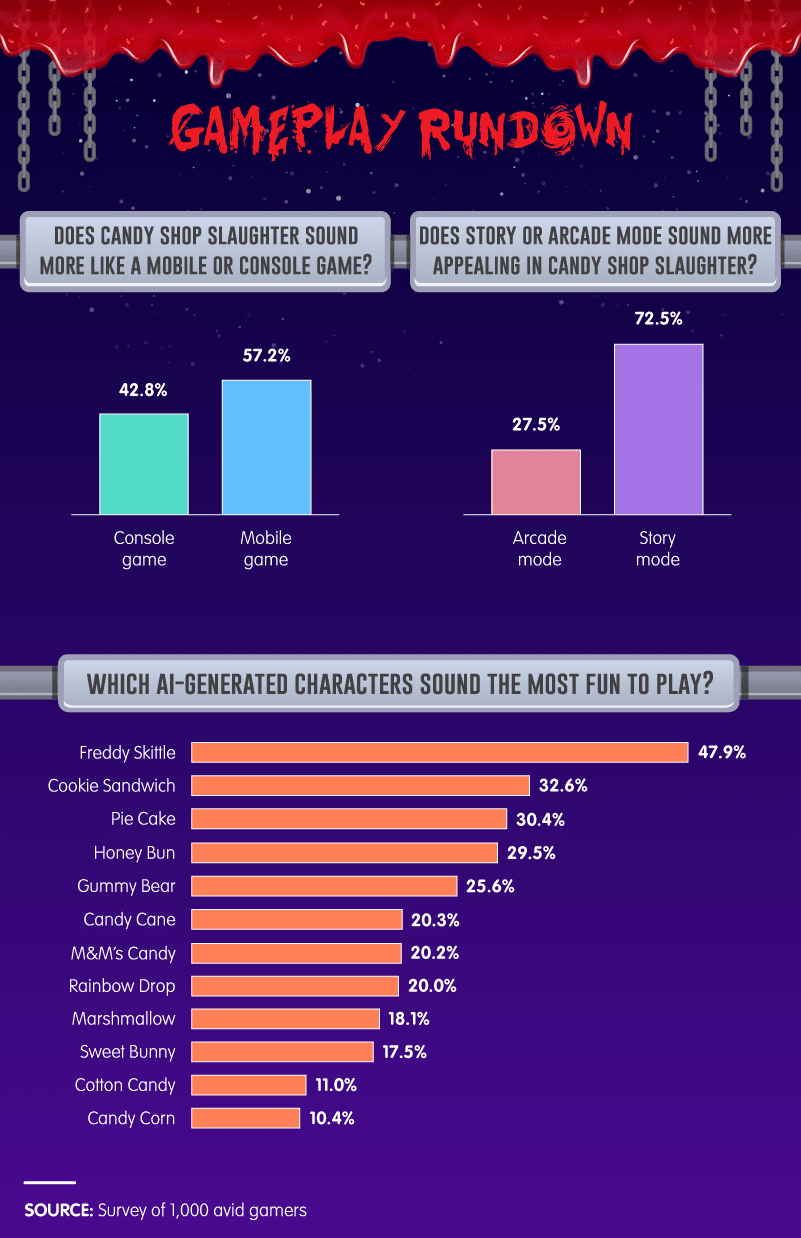

We collected results from 1,000 avid gamers. The survey was designed with the intent of having them rate the storylines and characters presented to them. Respondents were not informed that the video game, storylines, and characters were AI-generated. Video game storylines and characters were generated using GPT-3, a text-generating program from OpenAI.

Who were these “avid” gamers? Were they Mechanical Turk workers? Were they Online Roulette customers? Were they Twitter respondents? We don’t know.

What exact images and text were the respondents exposed to? Because if they were exposed to this website, the one the above images came from, they weren’t exposed to the game GPT-3 supposedly spit out.

The entire website describes a game GPT-3 allegedly generated, but nowhere is GPT-3 quoted, nor is it made explicit that any of the text on the site is directly attributable to GPT-3.

Exactly what did GPT-3 generate?

Why weren’t the survey respondents told they were evaluating a game allegedly generated by an AI?

The real problem

For the sake of argument, let’s say the images and text on the Online Roulette website were actually spit out by GPT-3 in the form we see them. They weren’t. But let’s just say they were.

It would be useless information.

It’s insulting that anyone would think game development and design is such a whimsical field that a machine could randomly spit out ideas that could challenge human talent.

Game developers spend lifetimes learning the industry and its fans. It takes years to gain a perspective on the $90 billion video game market. And even if you have an amazing idea, that doesn’t mean it’ll translate into a compelling game.

Nobody is sitting around waiting for a random video game pitch-generator to spark their development careers.

If coming up with a good idea were the hard part, there’d be more game developers than there are players. That’s like saying GPT-3 is a threat to Metallica because it can write random lyrics about things that are dark.

But it’s even more insulting that, according to this article, at least one person involved with generating the data actually expects us to believe the results from GPT-3 weren’t cherry-picked. That’s a ludicrous claim.

no. GPT-3 fundamentally does not understand the world that it talks about. Increasing corpus further will allow it to generate a more credible pastiche but not fix its fundamental lack of comprehension of the world. Demos of GPT-4 will still require human cherry picking. https://t.co/6vl3ettSZk

— Gary Marcus (@GaryMarcus) August 2, 2020

The reality of the situation

We don’t know who was surveyed and we don’t know what the respondents actually saw. We also don’t know what parts of the game’s marketing pitch GPT-3 actually generated.

For all we know, the “researchers” generated results until they found something they liked and then started using prompts specific to that result to generate dozens or hundreds of options, which they then curated, arranged, and edited to go along with the images their human artists created.

Basically, Online Roulette is asking you to believe that a sketchy marketing pitch with zero details, about a survey referencing a game that doesn’t exist, highlights an example of working artificial intelligence.

The only thing impressive about “Candy Shop Slaughter” is that we’re talking about it.
