Columbia University researchers have developed a robot that displays a “glimmer of empathy” by visually predicting how another machine will behave.

The robot learns to forecast its partner’s future actions and goals by watching just a few video frames of the partner’s movements.

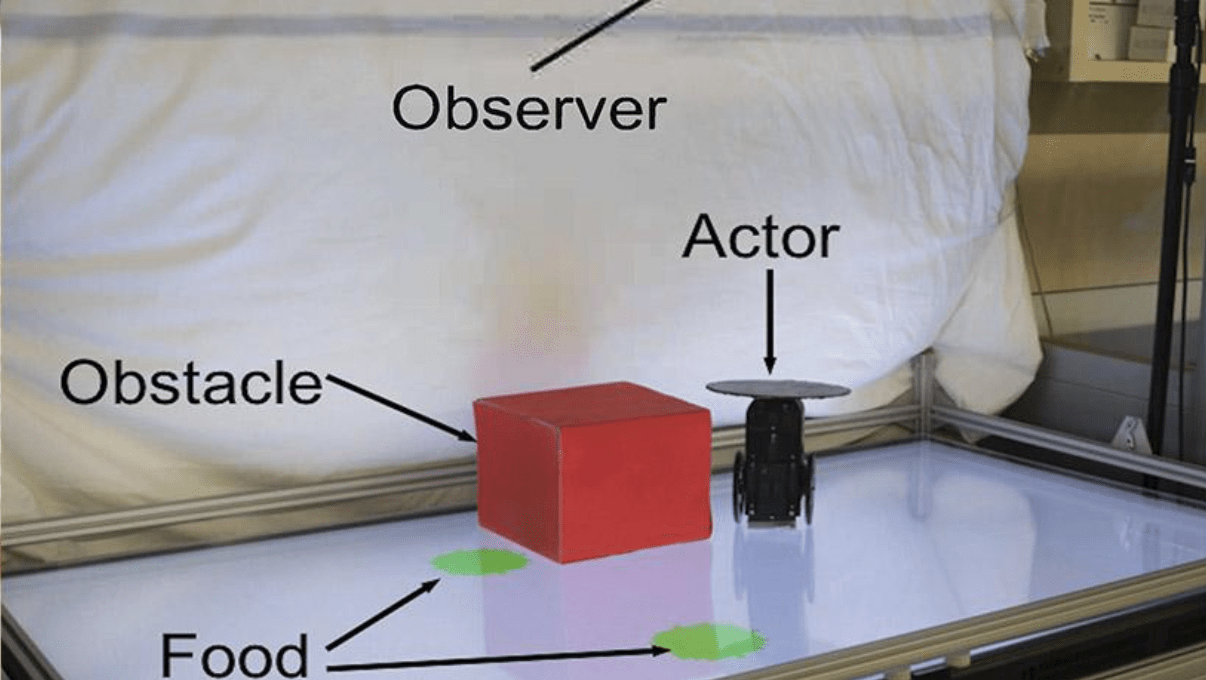

The researchers first programmed the partner robot to move towards green circles in a playpen measuring roughly 3×2 feet. When its cameras spotted a green circle, it would move directly towards it. If an obstacle hid the circle from view, it would either roll towards a different circle or not move at all.

After watching the actor robot’s behavior for roughly two hours, the observer began predicting its partner’s future movements. It eventually predicted the actor’s goal and path correctly 98 out of 100 times.


Boyuan Chen, the lead author of the study, said the initial results were “very exciting”:

“Our findings begin to demonstrate how robots can see the world from another robot’s perspective. The ability of the observer to put itself in its partner’s shoes, so to speak, and understand, without being guided, whether its partner could or could not see the green circle from its vantage point, is perhaps a primitive form of empathy.”

The team believes their approach could help pave the way towards a robotic “Theory of Mind,” the ability humans use to understand other people’s thoughts and feelings.

“We hypothesize that such visual behavior modeling is an essential cognitive ability that will allow machines to understand and coordinate with surrounding agents, while sidestepping the notorious symbol grounding problem,” the researchers said in their study paper.

The researchers acknowledge that the project has several limitations. The observer has not yet been tested on more complex actor logic, and it was given a full overhead view of the playpen, whereas in practice a robot would typically have only a first-person perspective or partial information.

They also warn that giving robots the ability to anticipate how humans think could lead them to manipulate our thoughts.

“We recognize that robots aren’t going to remain passive instruction-following machines for long,” said study lead Professor Hod Lipson.

“Like other forms of advanced AI, we hope that policymakers can help keep this kind of technology in check, so that we can all benefit.”

Nonetheless, the team believes the “visual foresight” they’ve demonstrated could deepen our understanding of human social behavior and lay the foundations for more socially adept machines.

You can read their study paper in Scientific Reports and find all their code and data on GitHub.
