Deepfakes are being used for a range of nefarious purposes, from disinformation campaigns to inserting people into porn, and the doctored images are getting harder to detect.

A new AI tool provides a surprisingly simple way of spotting them: looking at the light reflected in the eyes.

The system was created by computer scientists from the University at Buffalo. In tests on portrait-style photos, the tool was 94% effective at detecting Deepfake images.

The system exposes the fakes by analyzing the corneas, which have a mirror-like surface that generates reflective patterns when illuminated.

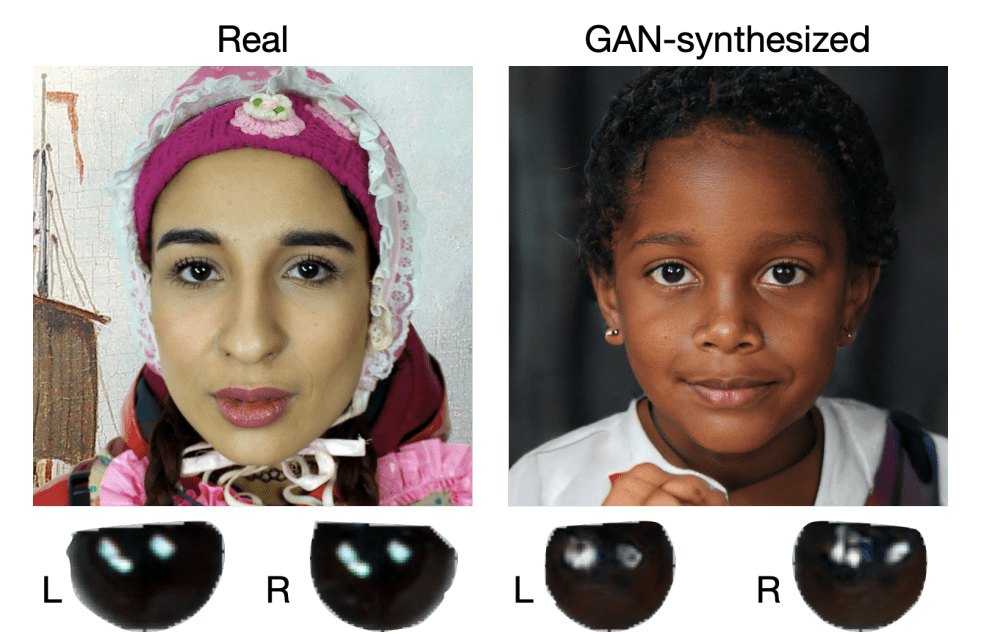

In a photo of a real face taken by a camera, the reflections in the two eyes will be similar because both eyes are seeing the same thing. But Deepfake images synthesized by GANs typically fail to accurately capture this resemblance.

Instead, they often exhibit inconsistencies, such as different geometric shapes or mismatched locations of the reflections.

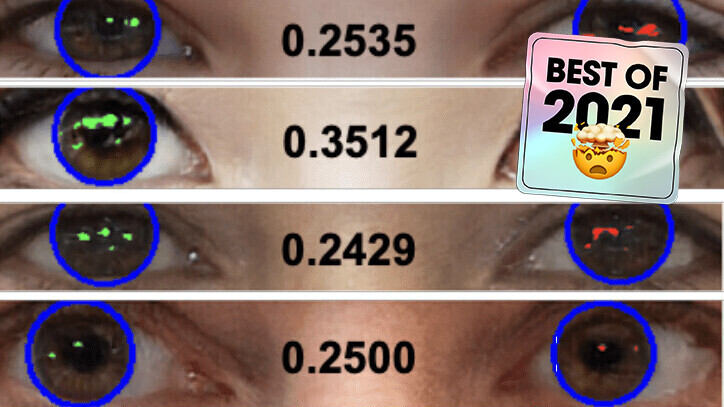

The AI system searches for these discrepancies by mapping out a face and analyzing the light reflected in each eyeball.

It generates a score that serves as a similarity metric. The smaller the score, the more likely the face is a Deepfake.
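To make the idea of a similarity score concrete, here is a minimal Python sketch. It is not the researchers' implementation: it assumes the two eyes have already been cropped to the same size and aligned, that a reflection is simply any pixel above a brightness threshold, and that similarity is measured as intersection over union (IoU) of the two bright regions. The function names, the threshold of 200, and the IoU metric are all illustrative assumptions.

```python
from typing import List

Gray = List[List[int]]  # grayscale eye crop, pixel values 0-255 (assumed input format)


def highlight_mask(eye: Gray, threshold: int = 200) -> List[List[bool]]:
    """Mark pixels bright enough to count as a reflection (threshold is an assumption)."""
    return [[px >= threshold for px in row] for row in eye]


def reflection_similarity(left: Gray, right: Gray, threshold: int = 200) -> float:
    """Return the IoU of the bright regions in two aligned, same-sized eye crops.

    1.0 means the reflections overlap perfectly; values near 0 mean they
    barely overlap, which under this sketch would hint at a synthesized face.
    """
    if len(left) != len(right) or any(len(a) != len(b) for a, b in zip(left, right)):
        raise ValueError("eye crops must have the same shape")

    lm = highlight_mask(left, threshold)
    rm = highlight_mask(right, threshold)

    # The approach needs a visible reflection in both eyes.
    if not any(any(row) for row in lm) or not any(any(row) for row in rm):
        raise ValueError("no reflection found in at least one eye")

    pairs = [(a, b) for ra, rb in zip(lm, rm) for a, b in zip(ra, rb)]
    intersection = sum(1 for a, b in pairs if a and b)
    union = sum(1 for a, b in pairs if a or b)
    return intersection / union


if __name__ == "__main__":
    real_left = [[0, 255, 255], [0, 0, 0]]
    real_right = [[0, 255, 255], [0, 0, 0]]
    fake_right = [[255, 255, 0], [0, 0, 0]]  # reflection shifted by one pixel
    print(reflection_similarity(real_left, real_right))
    print(reflection_similarity(real_left, fake_right))
```

In this toy setup, matching reflections score higher than shifted ones. A real detector would also need face and eye localization plus a tuned cutoff, none of which is shown here.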

The system proved highly effective at detecting Deepfakes taken from This Person Does Not Exist, a repository of images created with the StyleGAN2 architecture. However, the study authors acknowledge that it has several limitations.

The tool’s most obvious shortcoming is that it relies on a light source being reflected in both eyes. A forger can fix the inconsistencies in these patterns with manual post-processing, and if one eye isn’t visible in the image, the method won’t work.

The method has also only been proven effective on portrait images. If the face in the picture isn’t looking at the camera, the system would likely produce false positives.

The researchers plan to investigate these issues to improve the effectiveness of their method. In its current form, it’s not going to detect the most sophisticated Deepfakes, but it could still spot many of the cruder ones.

You can read the study paper here on the arXiv pre-print server.
